A Complete Noobs Guide to Vector Search, Part 2

Searching for Vectors in High Dimensional Spaces

I’m writing a book on Retrieval Augmented Generation (RAG) for Wiley Publishing, and vector databases are an inescapable part of building a performant RAG system. I selected Qdrant as the vector database for my book and this series. Over the last three posts, I’ve taught you everything you need to know to start working with the Qdrant vector database.

What’s in This Series?

A Complete Noobs Guide to Vector Search, Part 1: From Real-World Data to Vectors

A Complete Noobs Guide to Vector Search, Part 2: Searching for Vectors (this blog)

❤️If you find the newsletter and the series helpful, please do me a favour and smash that like button !

You can also help by sharing this with your network (whether via re-stack, tweet, or LinkedIn post) so they can benefit from it, too.

🤗 This small gesture and your support mean far more to me than you can ever imagine!

Let’s get to it!

Vector search is a powerful technique for finding similar points in a massive collection of points by comparing the similarity of their vector representations. It’s an efficient way to retrieve relevant information based on semantic similarity rather than exact keyword matching.

Vector search is like finding a needle in a haystack. But instead of hay, you have vectors; instead of a needle, you have a query vector.

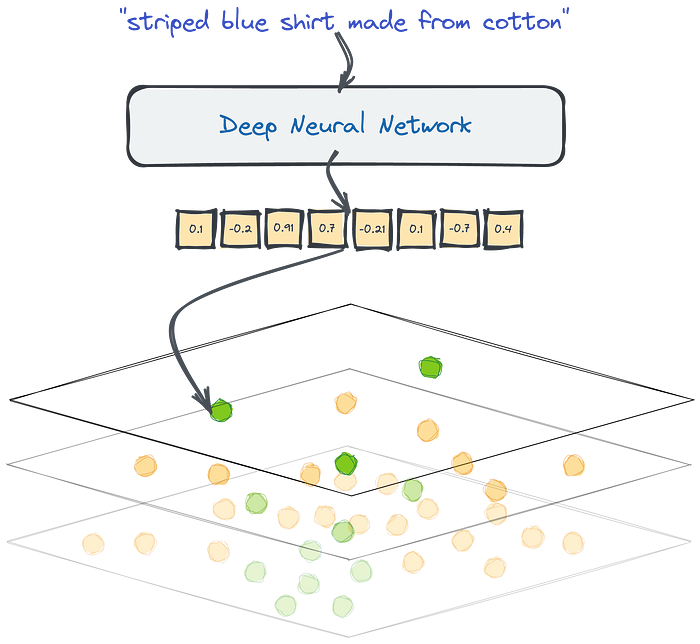

In the previous post in this series, I showed you how to transform text into a vector representation using an embedding model. That vector representation is a point in a multi-dimensional space. Using the search functionality in Qdrant, you’ll learn how to navigate this space to find the points most similar to your query.

Similarity Search: Finding the Closest Neighbors

“Similarity” in this context refers to how close vectors are to each other in this multi-dimensional space.

Vectors that are close together represent items that share similar characteristics or meanings. For example, vectors representing documents about deep learning research would be closer to each other than vectors representing documents about advanced filo pastry baking techniques. But how do you quantify similarity?

This is where metrics come in.

Metrics are equations used to compute distances between vectors. Qdrant lets you choose between similarity metrics like the dot product, cosine similarity, Euclidean Distance, and Manhattan distance. Choosing the appropriate similarity metric depends on the data you’re working with and the specific task. It’s a cop-out answer, but seriously, every question like this in AI always “depends” on something.

However, I’d like to offer some heuristics I’ve learned to help you choose an appropriate similarity metric.

📐 Cosine Similarity

Use cosine similarity when the magnitude of the vectors is not important, but the direction is.

It is commonly used in text similarity tasks like document clustering or information retrieval.

Cosine similarity measures the cosine of the angle between two vectors, ignoring their lengths.

If your data is sparse (i.e., many zero values), cosine similarity or dot product may be more appropriate than Euclidean or Manhattan distance, as they focus on the non-zero dimensions.

Qdrant uses a two-step process to compute cosine similarity for faster search speeds. It normalizes vectors when adding them to the collection and compares them using a fast dot product operation.

🔴 Dot Product

The dot product is similar to cosine similarity but considers the vectors’ magnitude.

It measures the alignment between two vectors and is influenced by their lengths.

The dot product is useful when the magnitude of the vectors carries meaningful information.

It‘s often used in recommendation systems, where the magnitude of user preferences or item ratings is significant.

If your vectors are normalized (i.e., unit vectors), cosine similarity and dot product will yield similar results.

፨ Euclidean Distance

Euclidean Distance measures the straight-line Distance between two vectors in the high-dimensional space.

It is suitable when the absolute differences between vector elements are essential.

Euclidean Distance is commonly used in tasks such as image similarity, where pixel intensities or feature values directly correspond to the visual appearance.

It is sensitive to the scale of the features, so feature normalization or standardization may be necessary.

If your data has varying scales or ranges across dimensions, consider normalizing or standardizing the features before applying similarity metrics like Euclidean or Manhattan distance.

🗽 Manhattan Distance

Manhattan distance, also known as L1 or city block distance, measures the sum of absolute differences between vector elements.

It is useful when the features represent distinct dimensions or attributes that are not necessarily related.

Manhattan distance is less sensitive to outliers compared to Euclidean Distance.

It is often used in tasks such as comparing binary or categorical features, where the presence or absence of certain attributes is more important than their exact values.

Now, I know what you’re thinking.

I mentioned that these metrics are equations that need to be computed. If you’re looking to find the most similar documents to a particular query vector, the standard approach would involve calculating the distance between the query vector and every other vector in the dataset. This method works fine when you have a small collection of points like the 100 we added to our collection in the previous post. However, this presents a challenge if you’re dealing with millions, tens of millions, hundreds of millions, or even billions of data points, which is the case in most real-world production settings.

To tackle this problem, you can implement methods comparable to those used in relational databases. Database indexes are established to speed up queries and prevent full table scanning. Likewise, vector databases use specialized data structures and algorithms to speed up the search process.

Navigating the Vector Space: HNSW and ANN

While similarity metrics provide the “ruler” for measuring distances between vectors, efficiently searching through a massive collection requires a different tool — Hierarchical Navigable Small World (HNSW) graphs.

HNSW is an Approximate Nearest Neighbor (ANN) algorithm for efficient vector search. Qdrant uses HNSW to efficiently find the vectors most similar to a given query vector without explicitly comparing them to all other vectors in the collection.

Picture a network of interconnected points, where each point represents a vector. HNSW hierarchically constructs this network, creating layers of connections between vectors. The search starts at the top layer and progressively moves down the hierarchy, narrowing the search space until the nearest neighbors are found. This technique means you can efficiently navigate the vector space and quickly zoom in on the most likely similar points without visiting every single point. Since it’s an ANN algorithm, it prioritizes speed over absolute precision. Instead of guaranteeing the absolute closest neighbors, it will efficiently find points that are close enough.

The search process is fast using vector databases like Qdrant, which uses ANN algorithms like HNSW. Instead of calculating the distance to every object in the database, you can intelligently select a subset of candidate objects to compare against, reducing the computational overhead. You get the benefit of sublinear search times and pretty good results. It’s like having a map with shortcuts that direct you to the neighborhoods where you’re most likely to find what you’re looking for.

This is exactly what you need when searching for massive scale points.

Now that you’ve got a good idea of how vector search works, it's time to see it in action. Start by initializing the Qdrant client.

import os

from dotenv import load_dotenv

from qdrant_client import QdrantClient

load_dotenv("./.env")

q_client = QdrantClient(

url=os.getenv('QDRANT_URL'),

api_key=os.getenv('QDRANT_API_KEY')

)Now, let’s get down to business and search for some vectors! Here’s what you need:

1. User Input: First, you need some text input. This could be anything from a search query to a random sentence.

2. Vectorize the Input: Next, you transform the input text into a vector embedding using the same embedding model you used when upserting into the collection. This transforms the text input into a point in the multidimensional vector space.

3. Qdrant for the heavy lifting: Now, use Qdrant to search vectors closest to our input vector.

4. Matchmaker, Matchmaker: You’ll get a list of vectors that best match the input text. These represent the most similar items in your collection.

First, a helper function must be defined to get the embedding representation of the input query.

from openai import OpenAI

openai_client = OpenAI()

def get_text_embedding(

text: str,

openai_client: OpenAI= openai_client,

model: str = "text-embedding-3-large") -> list:

"""

Get the vector representation of the input text using the specified OpenAI embedding model.

Args:

openai_client (OpenAI): An instance of the OpenAI client.

text (str): The input text to be embedded.

model (str, optional): The name of the OpenAI embedding model to use. Defaults to "text-embedding-3-large".

Returns:

list: The vector representation of the input text as a list of floats.

Raises:

OpenAIError: If an error occurs during the API call.

"""

try:

embedding = openai_client.embeddings.create(

input=text,

model=model

).data[0].embedding

return embedding

except openai_client.OpenAIError as e:

raise eBefore using the function defined below, it’s good to understand what gets returned when you search. Notice that I passed a list of keys for the payload I want to receive in the cell below. In the function, I set with_payload=True so it will return all the stuff in the payload.

q_client.search(

collection_name="arxiv_chunks",

query_vector=("summary" ,get_text_embedding("machine learning in sound and diffusion")),

with_payload=["summary", "title", "authors"],

limit=2

)This returns a list of the points like so:

[

ScoredPoint(

id='8aa3bc5d-7491-4917-b79c-4a10d05644e3',

version=0,

score=0.41306904,

payload={

'authors': [

'Dongchao Yang',

'Jianwei Yu',

'Helin Wang',

'Wen Wang',

'Chao Weng',

'Yuexian Zou',

'Dong Yu'

],

'summary':"..." #removed this for readability

'title': 'Diffsound: Discrete Diffusion Model for Text-to-sound Generation'

},

vector=None,

shard_key=None

)

]If your payload contains many keys, but you want to exclude only a couple, you can use PayloadSelectorExclude to explicitly state what you don’t want.

from qdrant_client import QdrantClient, models

exlusioner = models.PayloadSelectorExclude(exclude=["chunk", "text_id"])

q_client.search(

collection_name="arxiv_chunks",

query_vector=("summary" ,get_text_embedding("machine learning in sound and diffusion")),

with_payload=exlusioner,

limit=2

)You can also create more interesting and complex filters. This is useful when it’s impossible to express all the features of the object in the embedding. I recommend checking out the documentation for filters to understand your options. I’m sure we’ll make use of filtering as this series progresses.

Below, I’ve created a filter on the author field. The client should return the points where Dong Yu is one of the paper's authors.

author_filter = models.Filter(

should=[

models.FieldCondition(

key="authors",

match=models.MatchValue(value="Dong Yu")

)

])

q_client.search(

collection_name="arxiv_chunks",

query_vector=("summary", get_text_embedding("machine learning in sound and diffusion")),

query_filter=author_filter,

limit=5

)Other filtering clauses, like Must and Must Not, and filtering conditions, such as Match, Match Except, and Nested key, can be combined to form complex conditions. Again, I recommend checking out the documentation and hacking around.

Now, define a search function. You’ll see an argument named_vector_to_search, defining which vector you want to query against. Any other type of payload filtering can be passed as a keyword argument to this function.

def search(

named_vector_to_search: str,

input_query: str,

limit: int = 5,

client: QdrantClient = q_client,

collection_name: str = "arxiv_chunks",

**kwargs):

"""

Perform a vector search in the Qdrant database based on the input query.

This method takes an input query string, converts it into a vector embedding using the

"text-embedding-3-large" model, and searches for the closest matching vectors in the

Qdrant database. The search results are returned as a list of dictionaries containing

the item ID, similarity score, and payload information

Args:

input_query (str): The input query string to search for.

named_vector_to_search: the vector you want to search against

limit (int, optional): The maximum number of search results to return. Default is 3.

kwargs: Additional keyword arguments to pass to the Qdrant search method.

Returns:

list: A list of dictionaries representing the search results. Each dictionary contains

the following keys:

- "id": The ID of the matching item in the Qdrant database.

- "similarity_score": The similarity score between the input query and the matching item.

- metadata from the payload

"""

input_vector = get_text_embedding(input_query)

search_result = client.search(

collection_name=collection_name,

query_vector=(named_vector_to_search, input_vector),

limit=limit,

with_payload=True,

**kwargs

)

result = []

for item in search_result:

similarity_score = item.score

payload = item.payload

data = {

"similarity_score": similarity_score,

"summary": payload.get("summary"),

"title": payload.get("title"),

"source": payload.get("source"),

"authors": payload.get("authors")

}

result.append(data)

return result

QUERY_STRING = "agents, reasoning, chain-of-thought, few-shot prompting"

search(

named_vector_to_search= "summary",

input_query=QUERY_STRING

)This yields a list of similar points, which I will truncate to just one so you can see what the output looks like:

{'similarity_score': 0.58828723,

'summary': '...', #removed this for readability

'title': 'Text and Patterns: For Effective Chain of Thought, It Takes Two to Tango',

'source': 'http://arxiv.org/pdf/2209.07686',

'authors': ['Aman Madaan', 'Amir Yazdanbakhsh']}You can set the threshold for similarity by passing a value for score_threshold as a keyword argument.

search(

named_vector_to_search= "summary",

input_query=QUERY_STRING,

score_threshold=0.51

)That’s it for this one!

You’ve gotten but a glimpse of the power of vector search.

You’ve seen firsthand how you can use it to efficiently find similar points in a vast data collection by leveraging the vector representations of items. You’ve also understood how Qdrant simplifies this process by providing tools and algorithms like HNSW to navigate the high-dimensional vector space and retrieve the most relevant results. By understanding concepts like similarity metrics and approximate nearest neighbour search, you can harness the power of vector search to build applications that excel at semantic retrieval and similarity-based recommendations. Whether you’re working with text, images, or other data types, vector search opens up possibilities for creating intelligent and efficient context-augmented and context-aware systems.

There is much more ground to cover, and things will only get more interesting from here on out. I hope you’re as excited to learn about it as I am teaching you!

❤️ Support the Newsletter

If you found the newsletter helpful, please do me a favour and smash that like button!

You can also help by sharing this with your network (whether via re-stack, tweet, or LinkedIn post) so they can benefit from it, too.

🤗 This small gesture and your support mean far more to me than you can ever imagine!